Texas researchers have developed a device that can read a person's thoughts non-invasively, without implanted electrodes.

“We can grasp what the person means, not exactly what they are saying,” explained Alexander Huth, a neuroscientist at the University of Texas at Austin, during a press conference last Thursday.

That's a far cry from telepathy, however: before their mind can be "read," volunteers must first train the software developed by Dr. Huth, whose study was published Monday in Nature Neuroscience.

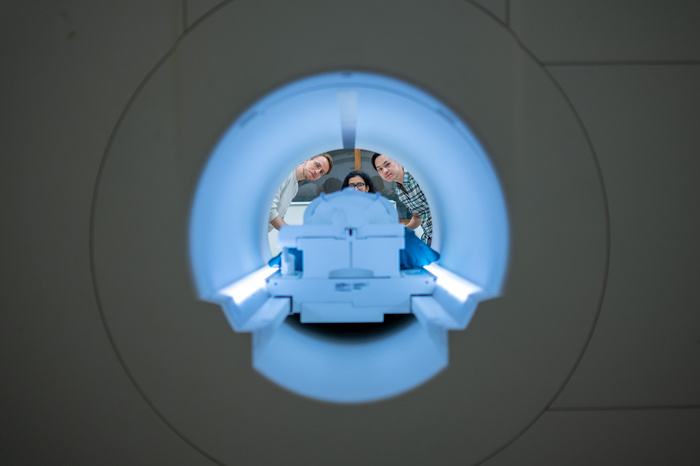

Until now, laboratory devices for reading minds required electrodes attached to the test subjects' skulls. The Texas team instead used functional magnetic resonance imaging (fMRI), a non-invasive technique that measures blood flow in the brain. They combined this data with an artificial intelligence language model similar to ChatGPT.

“We saw that thinking uses much larger regions of the brain than speech and hearing,” Huth said. “There were areas used for orientation, math, even touch.”

The first phase of the Texas experiment had each participant listen to 16 hours of episodes of The Moth, an American public radio show in which people tell personal stories in detail, while the "decoder" program was fed the same stories. Next, the participant listened to selected broadcasts while the decoder attempted to infer, from the fMRI data alone, what was being said.

“We were surprised at the accuracy,” Huth said. “For example, if a person on The Moth said ‘I didn't even have my driver's license back then,’ the decoder would translate the fMRI data as ‘he hadn't learned to drive yet.’ That's a pretty good paraphrase.”

In a second version of the experiment, the decoder had to reproduce a story the participant imagined. In a third, the participant watched a silent video that told a story, and the decoder had to describe that story. The decoder performed well in both cases.

Each of the three people who took part in the experiment had to train the decoder separately. It could not read the mind of anyone who had not first trained it with the 16 hours of The Moth episodes.

What is the next step?

“We want to refine the decoder,” Dr. Huth replied. “But we already have to think about the ethical implications of our technology. Eventually, fMRI scanners will become more sensitive and could capture sufficiently precise data at a distance of one or two meters, instead of about ten centimeters today. We might also manage to build a universal decoder that does not depend on one person's cooperation to train the algorithm. The possible inappropriate applications are numerous.”

The researchers' goal, rather, is to allow people with aphasia or paralysis to communicate with the outside world.